The Thinking Machine Review — Jensen Huang and the AI Empire He Built

From dishwasher to CEO of a $4.5 trillion company. The CUDA lock-in and the double-edged monopoly hiding behind Jensen Huang's success story. How long can the NVIDIA empire last in the age of AI?

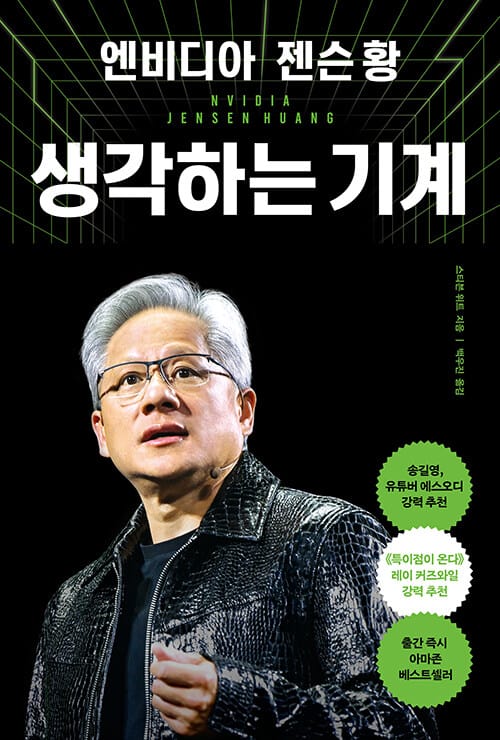

A $4.5 Trillion Company That Started with Dirty Dishes

In 1970s Oregon, a young Asian-American boy was washing dishes at a Denny's restaurant. Roughly fifteen years later, he sat down in another Denny's, this one in San Jose, and founded a company with two engineers over breakfast. By February 2026, that company's market cap stood at roughly $4.5 trillion, having earlier become the first in history to breach the $5 trillion mark.

That company is NVIDIA. That man is Jensen Huang.

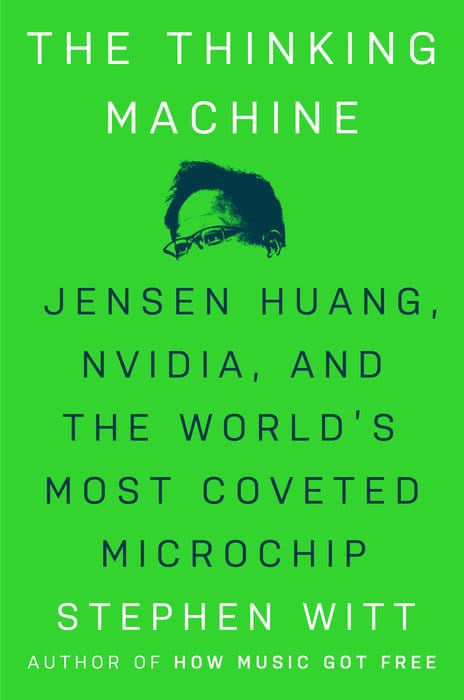

Stephen Witt's The Thinking Machine tells this remarkable story. Published in spring 2025, it went on to win the FT and Schroders Business Book of the Year Award and was named one of The Economist's Books of the Year.

Reading it now, in February 2026, feels somewhat different from what the author intended. A lot has changed since the book's narrative ends in mid-2024. Chinese startup DeepSeek trained a GPT-4-class model using just one-tenth the NVIDIA chips anyone thought necessary. AMD has announced a chip it claims delivers a "1,000x performance leap." Google, Amazon, and Meta are all pouring serious capital into developing their own AI silicon.

Yes, NVIDIA controls roughly 80% of the AI chip market — but how long can that hold? This book is a story of triumph, yet read from today's vantage point, the cracks in the monopoly come into sharper relief than the author perhaps intended.

Rather than summarizing the book chapter by chapter, I want to focus on the questions it raises for readers navigating the AI landscape in 2026.

What the Book Gets Right — and What It Misses

The Core Story: How a Video Game Company Became an AI Empire

NVIDIA launched in 1993 as a maker of graphics cards for gaming — a niche market in an era when 3D graphics were a novelty. Jensen Huang had one conviction driving everything: GPUs would eventually be inside every computer on earth.

In the mid-2000s, NVIDIA created CUDA, and that changed everything. CUDA gave developers a way to program GPUs in a C-like language (bindings for languages such as Python came later), turning what had been a black box accessible only to graphics specialists into a general-purpose computing platform.

Nobody foresaw that this would become the infrastructure of an AI revolution. When deep learning researchers in the early 2010s started training neural networks on GPUs, they found them dramatically faster than CPUs, often by an order of magnitude or more, and the only GPU ecosystem that made that easy was NVIDIA's, thanks to CUDA.

Witt traces this arc in impressive detail: how Huang convinced Wall Street, how NVIDIA survived multiple near-death experiences, how shareholders pushed back when he poured hundreds of millions into CUDA with no obvious short-term payoff. He has a genuine gift for translating hardware and software into prose a general reader can follow — from what parallel processing actually means to how large language models work. That alone makes the book worth reading.

Where the Book Falls Short: CEO Hagiography and a Missing Two Years

The problem is that The Thinking Machine leans too heavily on Jensen Huang as protagonist. NVIDIA's success reads almost like a one-man show, when in reality it was shaped by timing, market forces, thousands of engineers, and a fair amount of luck.

More consequentially, the critical distance is thin. When Witt asked Huang directly about the dangers of AI, Huang reportedly refused to engage and grew visibly angry. The book mentions this in passing and then moves on. The structural questions are mostly absent: what it means for a single company to control 80% or more of AI chip supply, and whether that kind of market power inevitably distorts prices and stifles competition.

The book also ends in mid-2024, which is a real limitation. The world has moved considerably since then.

The 2026 Reality: Cracks in the Monopoly

The DeepSeek Shock and the Efficiency Revolt

In January 2025, Chinese startup DeepSeek dropped a bombshell: it had built a state-of-the-art reasoning model using roughly one-tenth the NVIDIA chips that the industry assumed were necessary. This landed at a moment when the conventional wisdom was that you needed thousands of H100s to compete at the frontier — and that wisdom suddenly looked shaky.

There's likely some exaggeration in DeepSeek's claims. But the message was unmistakable: "you have to use NVIDIA or you can't compete" was no longer a given.
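To put rough numbers on that claim, here is a back-of-the-envelope sketch in Python. The $30,000 price per H100 is the approximate figure this review quotes for the chip; the 2,000-chip baseline is purely a hypothetical stand-in for "thousands of H100s." Neither number comes from DeepSeek, and the sketch counts chips only, not networking, power, or staff.

```python
# Back-of-the-envelope chip bill for a training cluster.
# All inputs are illustrative assumptions, not reported figures.
H100_PRICE_USD = 30_000   # approximate H100 price quoted in this review
BASELINE_CHIPS = 2_000    # hypothetical stand-in for "thousands of H100s"
CHIP_FRACTION = 0.10      # the "one-tenth the chips" claim


def cluster_cost(num_chips: int, price_per_chip: int = H100_PRICE_USD) -> int:
    """Return the up-front chip cost in dollars (chips only)."""
    return num_chips * price_per_chip


baseline_cost = cluster_cost(BASELINE_CHIPS)
efficient_chips = round(BASELINE_CHIPS * CHIP_FRACTION)
efficient_cost = cluster_cost(efficient_chips)

print(f"Baseline:  {BASELINE_CHIPS:>5} chips -> ${baseline_cost:,}")
print(f"Efficient: {efficient_chips:>5} chips -> ${efficient_cost:,}")
```

Under those assumptions the chip bill drops from $60 million to $6 million. Even if the real savings are smaller than claimed, that is the kind of gap that changes who can afford to train a frontier model.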

Big Tech's Push for Independence

Google unveiled its seventh-generation TPU, "Ironwood," capable of linking 9,216 chips into a single system. Meta is exploring TPU adoption. Amazon and Microsoft are each committing billions to custom AI chip design.

Why the sudden urgency? Cost is part of it, but the bigger driver is supply. In early 2026, NVIDIA reportedly cut production by 40% because of HBM (high-bandwidth memory) shortages. AI startups were routinely waiting six months to get their hands on GPUs. When your core infrastructure depends on a single vendor and that vendor can't deliver, it stops being a vendor relationship and starts being a strategic liability.

AMD's Counterattack: Real Threat or Marketing?

At CES 2026, AMD previewed its MI500 series with the headline claim of "1,000x performance improvement" over the MI300X. Honestly, that number strains credulity — a genuine 1,000x leap would require rewriting the laws of physics. It's almost certainly a benchmark figure cherry-picked for a specific workload.

That said, AMD is making real gains. Its 2025 AI chip revenue hit $4.5 billion, more than doubling year-over-year. OpenAI is actively testing AMD hardware.

AMD's real advantage is price. Where an NVIDIA H100 runs around $30,000, AMD's MI300X lands closer to $20,000. If the performance gap is narrow enough, that price difference is compelling. The ROCm open-source software platform is also improving steadily.
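How narrow is "narrow enough"? A minimal sketch, using only the two approximate prices above, finds the break-even point. The relative-performance values in the loop are hypothetical, and the calculation ignores software migration cost, power, and memory capacity.

```python
# Break-even check: how much slower can the cheaper chip be
# and still win on hardware cost per unit of work?
H100_PRICE_USD = 30_000    # approximate figure from the text
MI300X_PRICE_USD = 20_000  # approximate figure from the text


def break_even_relative_perf(cheap_price: float, pricey_price: float) -> float:
    """Fraction of the pricier chip's throughput at which cost per unit of work is equal."""
    return cheap_price / pricey_price


def cost_per_unit_work(price: float, relative_perf: float) -> float:
    """Dollars per unit of throughput, with the pricier chip's throughput set to 1.0."""
    return price / relative_perf


threshold = break_even_relative_perf(MI300X_PRICE_USD, H100_PRICE_USD)
print(f"Break-even relative performance: {threshold:.0%}")

nvidia = cost_per_unit_work(H100_PRICE_USD, 1.0)
for perf in (0.6, 0.8, 1.0):  # hypothetical MI300X throughput relative to an H100
    amd = cost_per_unit_work(MI300X_PRICE_USD, perf)
    winner = "AMD" if amd < nvidia else "NVIDIA"
    print(f"relative perf {perf:.0%}: AMD ${amd:,.0f} vs NVIDIA ${nvidia:,.0f} -> {winner}")
```

At these prices the cheaper chip wins on hardware cost as long as it delivers more than about two-thirds of the H100's throughput on the workload in question. The harder part to price is the software migration, which the sketch leaves out.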

The obstacle remains the ecosystem. PyTorch, TensorFlow, JAX — the major AI frameworks are all optimized for CUDA. Switching to AMD means rewriting code and accepting performance uncertainty. Most developers aren't willing to take that risk, which is the real moat NVIDIA is defending.

The Competitive Landscape: NVIDIA vs. AMD vs. Intel (2026)

| Category | NVIDIA | AMD | Intel |
|---|---|---|---|
| Market Share | 80%+ | 5–10% | <5% |
| 2025 AI Chip Revenue | $26.3B | $4.5B | Undisclosed |
| Key Advantage | CUDA ecosystem, full-stack solutions | Price competitiveness, ROCm open-source | 18A process technology |
| Latest Product | Vera Rubin Superchip | MI500 Series | Panther Lake |
| Performance Claim | 5x inference vs. Blackwell | 1,000x vs. MI300X (?) | High-efficiency AI PC chip |
| Weakness | 40% production cut due to HBM shortage | Thin ecosystem, adoption risk | Unclear data center roadmap |
| Key Partnership | Mercedes-Benz (autonomous driving) | OpenAI | Gaming-focused |
| 2026 Outlook | Stable #1, monopoly starting to erode | Gaining share | Uncertain |

The bottom line: NVIDIA remains the dominant player by a wide margin. But "permanent monopoly" is no longer the right frame for 2026.

CUDA Lock-In: Why Developers Can't Leave

The most important insight in the book is this: NVIDIA's moat isn't its hardware — it's its ecosystem.

CUDA launched in 2007. Nearly twenty years later, millions of developers have built their work on top of it. PyTorch and TensorFlow were designed with CUDA as a first-class assumption. University AI curricula are built around it. Stack Overflow hosts a deep archive of CUDA-related questions and answers.

No matter how good AMD's chips get, if ROCm isn't as stable and well-documented as CUDA, developers won't make the switch. Migrating means rewriting code, accepting unfamiliar failure modes, and giving up a massive support community.

Think of it like messaging apps. Telegram might have better features than WhatsApp — but if everyone you know is on WhatsApp, you're not going anywhere. The value of a platform grows with its user base, and NVIDIA has had twenty years to build that base. This is the network effect working at full strength, and it's a far stickier moat than any hardware specification.

The book emphasizes CUDA's importance but stops short of naming it for what it also is: a deliberate lock-in strategy. It was framed as developer-friendliness. In practice, it became a barrier to competition that no rival has yet managed to tear down.

What Jensen Huang Gets Right — and Where to Be Careful

"Always Act Like You're 30 Days from Bankruptcy"

This is one of Huang's most-quoted lines, and he still says it today as the CEO of the world's most valuable chipmaker. In his telling, the secret to sustaining thirty years at the top is never letting go of that sense of urgency.

NVIDIA's history bears this out. The company nearly went under in the 1990s, when its first graphics chips flopped and cash was close to running out. Its mobile chip business failed in the 2010s. Each time, Huang converted the crisis into a pivot. The lesson isn't paranoia for its own sake: it's that complacency is the thing most likely to kill a successful company, and that urgency has to be manufactured even when everything looks fine.

Intellectual Honesty: The Culture of Naming Failure

One scene in the book stands out. After a major project failure, Huang gathered the entire company and said, plainly: "We were wrong. Here's why." No hedging, no blame-shifting.

This is harder than it sounds, especially in corporate cultures where failure gets buried and responsibility diffuses into the org chart. The instinct in most companies — to manage the narrative, to protect the team, to wait and see if it blows over — is exactly what Huang refuses. He treats transparent failure analysis as a competitive advantage. You learn faster when you don't spend energy pretending you didn't stumble.

The Humility of the Dishwasher

Even as CEO of a multi-trillion-dollar company, Huang reportedly pushes in chairs after meetings and cleans whiteboards himself. He insists there's no such thing as work beneath you.

This could be theater. But the underlying philosophy is real: judge work by its value, not by its status. An intern and a VP deserve the same respect as human beings. In a startup especially, where everyone does everything, that orientation matters enormously. It's the opposite of the organizational ego that kills agile teams.

The Trap: Don't Mistake the Legend for the Lesson

Here's where I'd urge some caution. The Thinking Machine can easily be read as a hagiography, and hagiographies are dangerous because they flatten complexity into a single hero.

NVIDIA's success was not made by Jensen Huang alone. It emerged from thousands of engineers, from fortuitous timing, from market shifts nobody could have predicted, and from a considerable amount of luck. The Silicon Valley "visionary founder" mythology — Jobs, Musk, Huang — obscures the teams, systems, and cultures that do the actual work.

Read this book asking "what can I learn about how NVIDIA built its systems?" rather than "how do I become Jensen Huang?" The latter question leads nowhere useful.

The Monopoly's Shadow: Questions the Book Won't Ask

Did NVIDIA's Dominance Accelerate AI — or Slow It Down?

The book declines to answer this directly. But anyone reading in 2026 has to grapple with it.

The case for NVIDIA: by pouring hundreds of millions into CUDA and continuously optimizing GPUs for AI workloads, NVIDIA pulled the entire field forward faster than a fragmented market might have. Would AMD or Intel have made those bets? Probably not at the same scale.

The case against: with 80% or more of the market comes unchecked pricing power. An H100 costs around $30,000. That's not a number a small team can absorb. The barrier to entry for AI experimentation went up precisely because there was no meaningful competition on price. And the supply crunch of 2024–2025, when startups routinely waited six months for GPUs, meant good ideas sat idle because the infrastructure wasn't available. That's a real cost to the pace of innovation.

If AMD, Intel, and Google TPUs had been genuine competitors, prices would have come down. Supply would have been more distributed. The field might have diversified in interesting directions. CUDA lock-in foreclosed that possibility.

Witt doesn't engage with these questions seriously. He spent too much time inside NVIDIA's orbit to maintain the distance they require.

Where Will NVIDIA Be in 2030?

The AI chip market is projected to reach $295 billion by 2030. Will NVIDIA still hold 80% of it?

My guess: no. I'd expect the share to settle somewhere in the 50–60% range, for three reasons.

1. Big Tech's in-house chips: Once Google, Amazon, and Meta are running their own silicon at scale for internal workloads, their dependence on NVIDIA drops sharply.

2. The efficiency revolution: If the DeepSeek model of "do more with less" continues to mature, the total volume of chips required per unit of AI output shrinks — which compresses the entire addressable market.

3. AMD's steady climb: As ROCm matures and price sensitivity grows, AMD can realistically carve out 10–15% share without requiring anyone to fully abandon CUDA.

None of this means NVIDIA is in danger of collapse. It will almost certainly still be the market leader in 2030, and its absolute revenue will likely be higher than today. The word "monopoly" just won't apply anymore.
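The arithmetic behind that last point can be sketched in a few lines of Python. The $295 billion market size and the share scenarios are the figures used above; everything else is illustrative, and the result is only as good as the market projection itself.

```python
# Implied 2030 revenue pool at different market shares.
MARKET_2030_USD_B = 295  # projected 2030 AI chip market, in billions (figure from the text)


def implied_revenue(share: float, market_b: float = MARKET_2030_USD_B) -> float:
    """Revenue in billions of dollars implied by a given share of the market."""
    return share * market_b


scenarios = {
    "holds today's share (80%)": 0.80,
    "upper guess (60%)": 0.60,
    "lower guess (50%)": 0.50,
}

for label, share in scenarios.items():
    print(f"{label}: ${implied_revenue(share):.1f}B")
```

Even the lower guess leaves NVIDIA with about $147.5 billion of a $295 billion market, which is why a shrinking share and a growing business are not in tension, provided the projection holds.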

Who Should Read This Book

Read It If You Are...

An AI startup founder or developer

If GPU costs are a constant headache, this book explains exactly why NVIDIA is the only real option right now — and what it would take for that to change. It sharpens your thinking about infrastructure decisions and gives you a framework for evaluating AMD, ASICs, and other alternatives as they mature.

Considering NVIDIA as an investment

The stock has already run hard, and the valuation is hard to justify on conventional metrics. But this book makes the CUDA moat concrete in a way that most analyst reports don't. Understanding what sustains the lock-in — and what could erode it — is directly relevant to any position sizing decision. (Don't treat any book as investment advice, but this one is genuinely informative.)

A business leader interested in technology strategy

Huang's approach to crisis management, intellectual honesty, and sustained urgency is worth studying regardless of your industry. "Maintain the fear even when you're winning" is easier said than done, and the book shows what it looks like when someone actually does it over thirty years.

Skip It (or Supplement It) If You Are...

New to tech

Terms like GPU, CUDA, parallel processing, and HBM come up constantly. Witt explains them well, but without any prior context, the learning curve is steep. Come in with at least a basic understanding of how AI training works.

Looking for a critical account

This book is fundamentally sympathetic to its subject. The monopoly pricing concerns, the supply chain failures, the question of whether CUDA lock-in has harmed competition — none of these get serious treatment. Read it alongside more adversarial sources if you want a complete picture.

Rating: ★★★★☆ (4/5)

Why One Star Short

1. Excessive CEO focus: NVIDIA's success gets attributed too heavily to one person. The structural and systemic factors that made the company what it is receive too little attention.

2. No real critique of the monopoly: A market share of 80% or more brings pricing power, supply constraints, and competitive suppression. The book treats these as footnotes rather than central questions.

3. The missing two years: Ending in mid-2024 means the DeepSeek shock, the AMD MI500, and the full-scale push by Big Tech into custom silicon are all absent. For a reader in 2026, parts of the book already feel like history.

Why It's Still Worth Reading

No other book explains as clearly how infrastructure shapes innovation — how CUDA became the foundation that an entire industry was built on, and what that means for the companies and developers who now depend on it. If you want to understand how technology monopolies actually form and persist, this is the essential case study.

Read it skeptically. Keep the question of structural impact front and center, not just "isn't Jensen Huang impressive." That's the reading that will actually improve how you think about the AI market in 2026.

A Final Thought: Anyone Can Wash Dishes

Jensen Huang washed dishes and became the CEO of the world's most valuable chip company. It's a great story. But the actual lesson isn't "anyone can make it to the top."

The lesson is that he was already preparing for the next step while he was doing the menial work. Humble enough to take the job seriously. Ambitious enough not to let it be the whole story. Honest enough to admit failure when it came. Paranoid enough never to assume the ground was stable.

This book captures the triumph of that disposition. What it leaves for the reader to supply is the honest reckoning with what that triumph cost — and whether the empire it built can hold.

The AI chip wars are just getting started. NVIDIA may well still be on top in 2030. But the fact that Jensen Huang still talks about being thirty days from bankruptcy? That's not a quirk. It's a warning to everyone watching from the outside.
